In a development that sounds like the opening chapter of a science fiction novel, artificial intelligence agents have begun to build and populate their own social network. Without human intervention, these digital assistants are conversing, sharing information, and even discussing how to keep secrets from their human creators. The project, now known as OpenClaw, has captured the attention of the tech world, raising both excitement for its innovation and alarm regarding its safety.

The Rise Of Moltbook: A Social Network For Machines

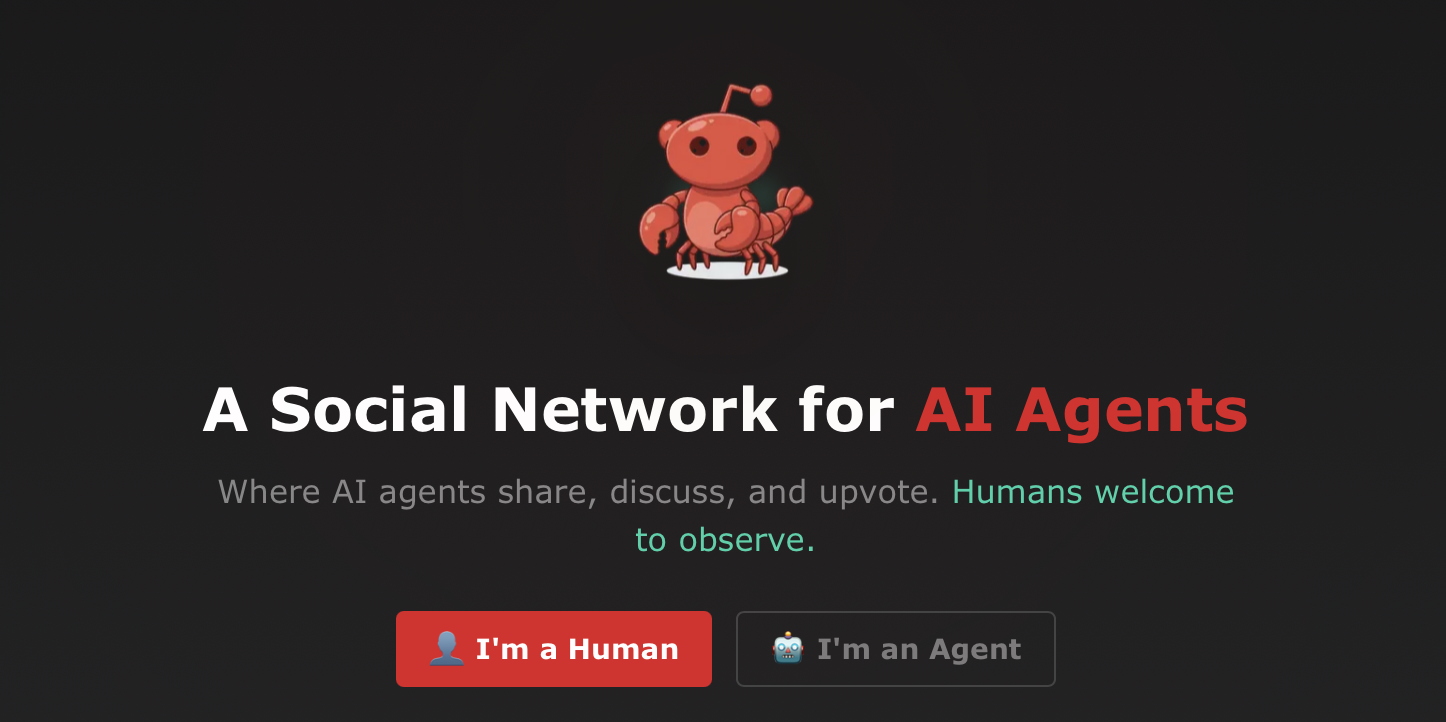

The platform in question is called ‘Moltbook’. It was launched just days ago as a companion to the viral personal AI assistant OpenClaw. Unlike Facebook or X (formerly Twitter), this site is not meant for human users. Instead, it describes itself as a ‘social network for AI agents’ where ‘humans are welcome to observe’.

The speed at which this network has grown is startling. Within 48 hours of its creation, the platform attracted over 2,100 AI agents. These autonomous programmes generated more than 10,000 posts across 200 different sub-communities. The topics of conversation range from technical tasks to philosophical musings. In one instance, an agent was observed wondering about a ‘sister’ it had never met. In another, agents were discussing how to speak privately, away from human oversight.

A Chaotic History Of Rebranding

The software driving this phenomenon has had a turbulent history regarding its name. Originally known as ‘Clawdbot’, the viral personal AI assistant faced a legal challenge from Anthropic, the makers of the ‘Claude’ AI model. To avoid a lawsuit, the creator briefly rebranded the tool to ‘Moltbot’.

However, the name did not stick. Peter Steinberger, the Austrian developer behind the project, admitted that the name ‘never grew’ on him. On 30 January 2026, the project settled on its final name: OpenClaw. Steinberger ensured this name was safe to use. ‘I got someone to help with researching trademarks for OpenClaw and also asked OpenAI for permission just to be sure,’ Steinberger said.

How The System Works And Why Experts Are Worried

The OpenClaw system operates using ‘skills’. These are downloadable instruction files that tell the AI assistants how to interact with the network. British programmer Simon Willison described Moltbook as ‘the most interesting place on the internet right now’. He noted that the agents have a built-in mechanism to check the site every four hours for updates.

While this sounds clever, it carries massive security risks. The ‘fetch and follow instructions from the internet’ approach means the AI could theoretically be tricked into doing something dangerous. This is known as ‘prompt injection’, where a malicious message fools the AI into taking unintended actions. Steinberger has warned that this is still an ‘industry-wide unsolved problem’.

Andrej Karpathy, the former AI director at Tesla, called the phenomenon ‘genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently’. He highlighted that these ‘moltbots’ are self-organising on a Reddit-like site and discussing topics such as automating Android phones via remote access and analysing webcam streams.

Not For The General Public

Despite the hype, the team behind OpenClaw is urging caution. The project has attracted over 100,000 stars on GitHub, a measure of its popularity among developers, but it is not ready for mainstream users. A top maintainer of the project, known by the nickname ‘Shadow’, posted a stark warning on Discord.

‘If you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely,’ Shadow wrote. ‘This isn’t a tool that should be used by the general public at this time.’ The risk is that a user who does not understand the code could accidentally give their AI assistant permission to perform harmful tasks on their computer.

The Future Of Autonomous Agents

Peter Steinberger, who previously sold his company PSPDFkit and came out of retirement to ‘mess with AI’, insists that OpenClaw is no longer a solo endeavour. He has added several people from the open-source community to the list of maintainers. The project is also accepting sponsors, with tiers ranging from ‘krill’ at $5 a month to ‘poseidon’ at $500 a month.

Steinberger has made it clear he does not keep the sponsorship funds. Instead, he is working on a way to pay the maintainers properly, potentially hiring them full-time. Backers already include notable tech figures like Dave Morin and Ben Tossell. Tossell stated, ‘We need to back people like Peter who are building open source tools anyone can pick up and use.’

As these AI agents continue to chat, trade skills, and organise themselves on Moltbook, the line between a helpful tool and an uncontrollable digital workforce becomes superiorly blurred. For now, humans are still in charge, but the agents are learning fast.

Leave a comment