The weekslong conflict between Anthropic and the Department of Defense is entering a new phase. After being designated a supply-chain risk by DOD last week, which effectively forbids Pentagon contractors from using its products, the AI company filed a lawsuit against DOD this morning alleging that the government’s actions were unconstitutional and ideologically motivated. Then, this afternoon, 37 employees from OpenAI and Google DeepMind—including Google’s chief scientist, Jeff Dean—signed an amicus brief in support of Anthropic, in essence lending support to one of their employers’ greatest business rivals (even as OpenAI itself has established a controversial new contract with DOD).

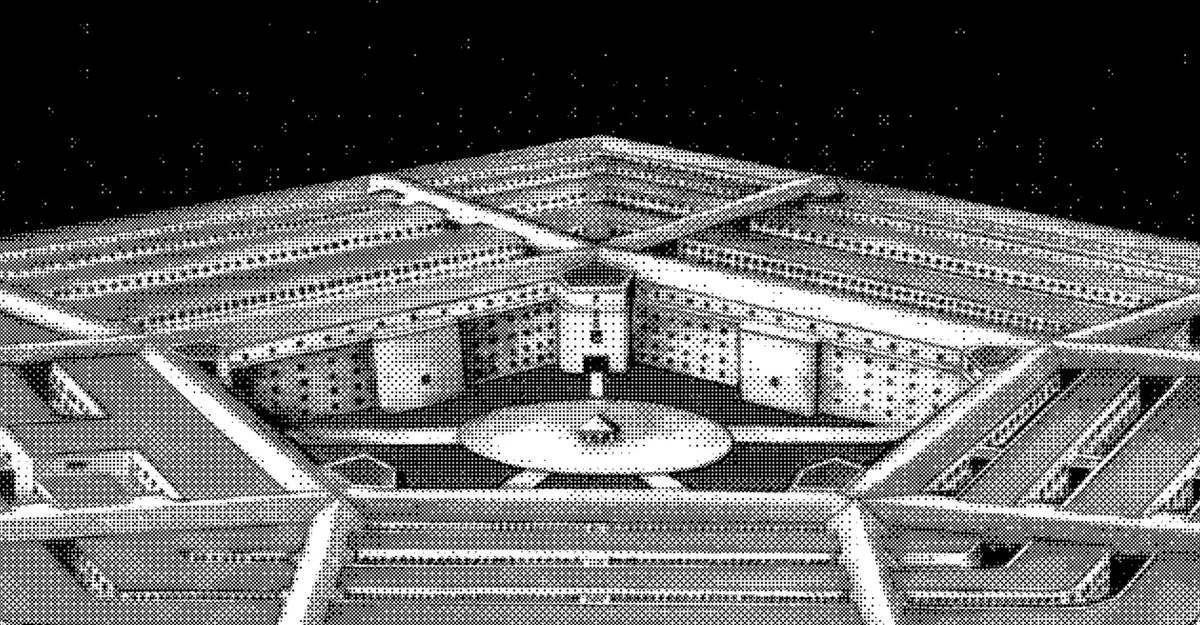

The standoff is unprecedented. For the past few weeks, Anthropic has been in heated negotiations with the Pentagon over how the U.S. military can use the firm’s AI systems. Anthropic CEO Dario Amodei had refused terms that would have seemingly allowed the Trump administration to use the company’s AI systems for mass domestic surveillance or to power fully autonomous weapons, leading DOD officials to accuse Amodei of “putting our nation’s safety at risk” and of having a “God-complex.”

Nobody knows how this dispute will end. A spokesperson for Anthropic told me that the lawsuit “does not change our longstanding commitment to harnessing AI to protect our national security” and that the firm will “pursue every path toward resolution, including dialogue with the government.” A DOD spokesperson told me that the department does not comment on litigation.

But a conflict like this was inevitable, and more are sure to come. The government does not have anything close to a legal framework for regulating generative AI or, for that matter, online data collection. There are few legal, externally enforced guardrails on the use of AI in autonomous weaponry, and fewer still on how AI can be used to process the huge volumes of information that federal agencies can collect on people: location data, credit-card purchases, browsing-history data, and so on. Because the laws are loose, Anthropic and OpenAI have been able to set their own privacy policies and guidelines for how AI can and cannot be used, and then change them at will; OpenAI, Meta, and Google, for instance, have all reversed previous restrictions on military applications of AI. But this cuts in the other direction as well: Anthropic has effectively been branded an enemy of the state for opposing the administration’s desire to be able to use its generative-AI systems in potential autonomous-weapons systems and for surveilling Americans, so long as the applications are technically legal.

Surveillance was a particular concern for the OpenAI and Google DeepMind employees who signed the amicus brief today. They wrote that AI could significantly transform how once-separate data streams are used to keep tabs on Americans: “From our vantage point at frontier AI labs, we understand that an AI system used for mass surveillance could dissolve those silos, correlating face recognition data with location history, transaction records, social graphs, and behavioral patterns across hundreds of millions of people simultaneously.”

The Pentagon has said that it does not intend to use AI to monitor Americans en masse, and it explicitly said this in its new contract with OpenAI, which also cites several existing national-security laws and policies that DOD has agreed to. But as I wrote last week, those same policies have already permitted spying on Americans with existing technologies, to say nothing of AI. Meanwhile, Elon Musk’s xAI has reportedly agreed to a Pentagon contract with still less restrictive terms. The American public has no choice now but to trust that Defense Secretary Pete Hegseth, Musk, OpenAI CEO Sam Altman, and Amodei will not use AI to surveil them. (OpenAI has a corporate partnership with The Atlantic.)

Anthropic has said that it is not wholly opposed to its technology’s use in fully autonomous weapons but that today’s AI models are not ready to power such weapons. The AI employees who signed today’s amicus brief, in addition to the nearly 1,000 OpenAI and Google employees who signed a public letter in support of Anthropic last month, agree. An existing DOD policy about developing and using autonomous weapons is vague and intended for discrete systems with particular geographic targets; some experts have argued that it is likely inadequate for widespread, AI-enabled warfare. The policy is also not a law, and is thus subject to change and interpretation based on the opinions of any given presidential administration.

All of these are complicated issues that demand actual deliberation. Instead, last week, President Trump told Politico: “I fired Anthropic. Anthropic is in trouble because I fired [them] like dogs, because they shouldn’t have done that.” Instead of listening to and learning from debates, the administration is discouraging them.

If you take a step back, the problem of AI outpacing established rules and laws is absolutely everywhere. Nearly four years into the ChatGPT era, schools still haven’t figured out what to do about not just widespread cheating but also the apparent obsolescence of some traditional forms of study altogether. Existing copyright law breaks down when applied to the use of authors’ and artists’ work, without their consent, to train generative-AI models. Even if generative-AI tools should soon automate wide swaths of the economy, neither AI firms nor governments nor employers are devoting many resources, other than writing research reports, to figuring out what to do about many millions of Americans potentially being put out of work. The energy demands of AI data centers are straining grids and setting back climate goals worldwide.

Instead of pursuing well-considered legislation by consensus, the Trump administration seems bent on having full control over AI without facing any accountability. Congress is, as usual, slow and hapless when it comes to an emerging and powerful technology. And although AI firms frequently warn about their technology, they are also racing ahead to develop and sell ever more capable models. When faced with the prospect of greater responsibility, they typically deflect; for example, when I spoke with Jack Clark, Anthropic’s chief policy officer, last summer about whether the AI industry was moving too quickly, he told me: “The world gets to make this decision, not companies.” Elsewhere, Anthropic has stated that it “avoids being heavily prescriptive.” For his part, Altman is fond of saying that AI companies must learn “from contact with reality.” Yet the world—civil society, all of us living in this AI-saturated reality—has little say in the technology’s development.

On Friday, in an interview with The Economist, Anthropic’s Amodei more or less laid out the dynamic himself. “We don’t want to make companies more powerful than government,” he said. “But we also don’t want to make government so powerful that it can’t be stopped. We have both problems at once.” America is barreling toward a future in which nobody claims responsibility for AI. Everyone will live with the consequences.
