For decades, productivity gains in electronic design automation (EDA) came from better engines. Faster solvers, higher-capacity simulators, and more scalable formal tools allowed design and verification teams to keep pace as designs grew larger. That model is no longer sufficient.

Today’s design and verification bottleneck is not raw tool performance, but the coordination overhead required to plan, execute, interpret, and adapt verification across tightly coupled and constantly evolving workflows. Modern system-on-chip (SoC) designs combine multiple clock domains, aggressive power management, and embedded software, and they are often subject to late-stage architectural changes. Design and verification is no longer a linear activity that can be planned once and executed to completion. Instead, engineers spend much of their time steering runs, refining intent, responding to partial results, and reconciling coverage, failures, and design changes across tools and iterations. Productivity is increasingly constrained by process-level complexity rather than individual tool limitations.

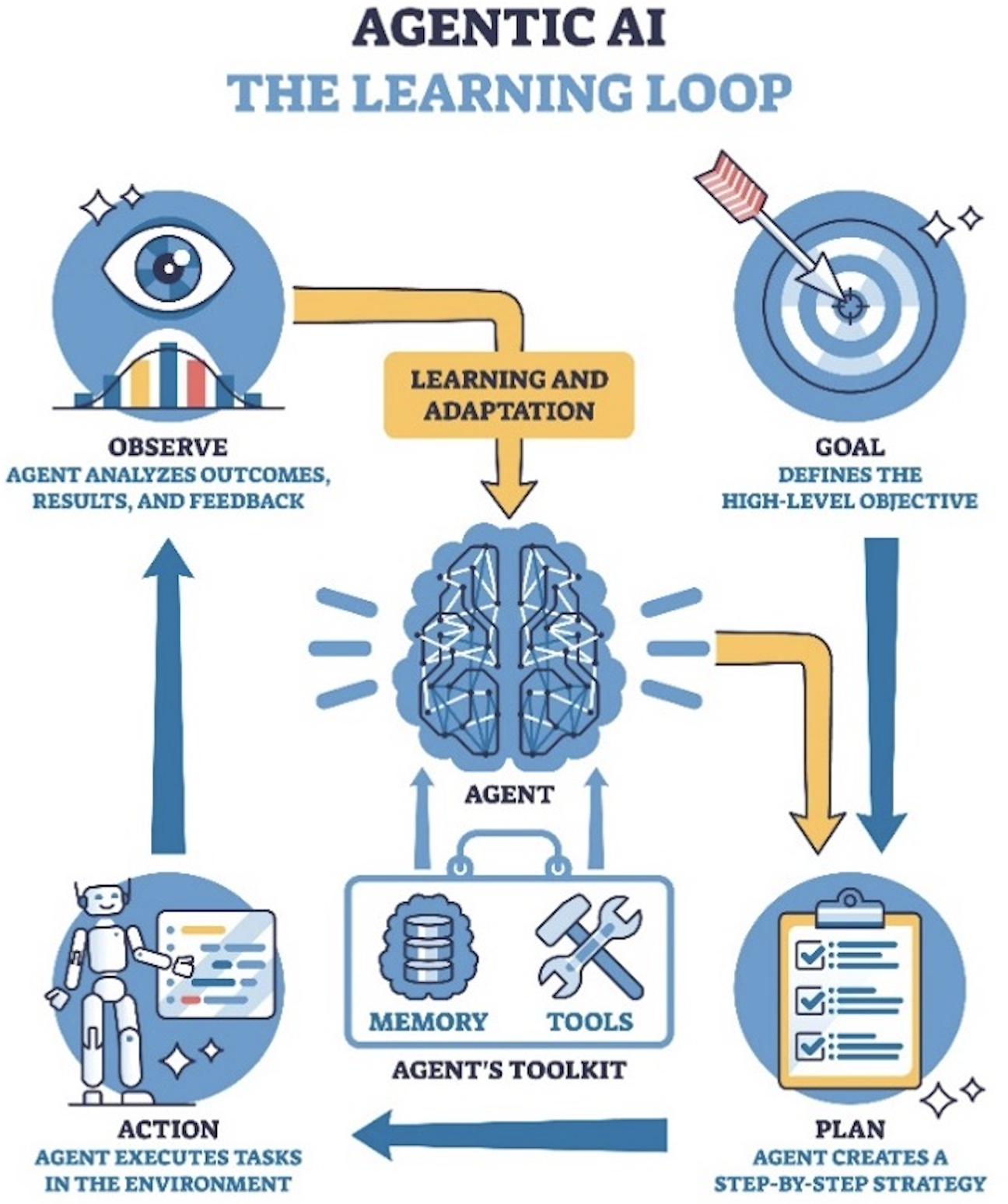

This shift is driving interest in agentic AI for EDA. Rather than optimizing isolated steps, agentic approaches embed intelligence directly into multi-step workflows, helping engineers manage intent, context, and adaptation across design and verification. The key challenge is doing so without undermining trust, accountability, or sign-off rigor.

From tool automation to workflow intelligence

Traditional automation assumes stable inputs and predominantly linear flows. The reality of design and verification is different. Inputs evolve as designs change, specifications are refined, and intermediate results expose new risks. Experienced engineers continuously reinterpret results and adjust strategy, often across multiple tools and domains.

Agentic EDA addresses this mismatch by introducing goal-driven software agents that can observe verification state, plan bounded actions, execute tasks, and summarize outcomes across iterations. The objective is not to replace engineers, but to reduce manual coordination overhead and allow experts to focus on judgment, prioritization, and risk assessment.
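The observe, plan, execute, and summarize cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the toolkit's implementation: `VerificationState`, `plan_next_action`, `execute`, the coverage percentages, and the step budget are all hypothetical names and values invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class VerificationState:
    """Hypothetical snapshot of what an agent can observe."""
    coverage: int                                   # percent of coverage goals hit
    failing_tests: list = field(default_factory=list)


def plan_next_action(state, coverage_goal):
    """Pick one bounded action from the observed state (illustrative policy)."""
    if state.failing_tests:
        return ("triage_failure", state.failing_tests[0])
    if state.coverage < coverage_goal:
        return ("run_regression", "coverage_holes")
    return None  # goal reached, nothing left to plan


def execute(action, state):
    """Stand-in for a real tool run: returns the next observed state."""
    kind, _target = action
    if kind == "triage_failure":
        return VerificationState(state.coverage, state.failing_tests[1:])
    return VerificationState(min(100, state.coverage + 10), state.failing_tests)


def run_agent(state, coverage_goal=90, max_steps=5):
    """Observe -> plan -> execute loop with a hard step budget, then summarize."""
    history = []
    for _ in range(max_steps):
        action = plan_next_action(state, coverage_goal)
        if action is None:
            break
        state = execute(action, state)
        history.append(action)
    summary = {
        "steps": len(history),
        "coverage": state.coverage,
        "open_failures": len(state.failing_tests),
    }
    return history, summary
```

The step budget (`max_steps`) is what keeps the loop bounded: the agent stops and reports even if the goal has not been reached, leaving the next decision to the engineer.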

Crucially, agentic systems are most effective when they operate at the workflow level, rather than as external wrappers around tools. Without deep integration, AI systems are limited to parsing logs or generating inputs after the fact, which increases the review burden and reduces confidence. Engine-native integration allows AI-generated actions to be evaluated using the same semantics, coverage models, and checks used for sign-off.

Human-in-the-loop by design

Despite rapid advances in large language models and reasoning systems, full autonomy is neither practical nor desirable for RTL sign-off. Design and verification decisions routinely involve incomplete specifications, implicit assumptions, and tradeoffs that require human judgment. Knowing when results are “good enough” is often contextual and risk-based.

For this reason, modern agentic design and verification must be explicitly human-centered. Agents should propose actions, execute well-defined tasks, and bring insights to the surface, while engineers retain decision authority at all sign-off-critical points. Human review is not a temporary limitation of AI maturity; it is a deliberate architectural choice to preserve accountability, trust, and verification integrity.

In practice, this means embedding explicit approval checkpoints into agentic workflows. Any action that affects design and verification intent, execution scope, or closure criteria requires human confirmation. AI accelerates the mechanics of planning, execution, and analysis, but engineers remain firmly in control.
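One way to picture such a checkpoint is a small gate that every proposed action must pass through. The sketch below is an assumption-laden illustration rather than the toolkit's mechanism: `ProposedAction`, `SIGNOFF_CRITICAL`, and `run_with_checkpoint` are hypothetical names, and the three categories simply mirror the ones listed in the paragraph above.

```python
from dataclasses import dataclass

# Hypothetical labels for the kinds of change that require confirmation.
SIGNOFF_CRITICAL = {"verification_intent", "execution_scope", "closure_criteria"}


@dataclass(frozen=True)
class ProposedAction:
    description: str
    affects: frozenset  # which aspects of the flow the action would change


def needs_approval(action):
    """True when the action touches any sign-off-critical aspect."""
    return bool(action.affects & SIGNOFF_CRITICAL)


def run_with_checkpoint(action, approve, execute):
    """Execute directly if not sign-off-critical; otherwise ask the engineer first.

    `approve` and `execute` are callables supplied by the caller, so the
    gate itself has no path that bypasses the human decision.
    """
    if needs_approval(action) and not approve(action):
        return "rejected"
    execute(action)
    return "executed"
```

In this sketch, an action such as rerunning a failing seed would run immediately, while an action tagged `closure_criteria` (for example, waiving a coverage bin) would run only if the `approve` callback returns `True`.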

An architectural foundation for agentic verification

Delivering trustworthy agentic workflows requires more than adding AI features to individual tools. It requires an architectural foundation that exposes design and verification engines to agentic frameworks in a structured and semantically meaningful way.

The Questa One Agentic Toolkit extends the Siemens Questa One design and verification portfolio with Model Context Protocol (MCP) interfaces. These engine-native interfaces allow agentic systems to invoke tools, retrieve results, and observe verification states safely and predictably. Rather than relying on unstructured text interactions or brittle scripting, the MCP interfaces provide curated workflow entry points, such as running simulations, querying coverage, updating verification plans, or analyzing failures.
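To make the contrast with unstructured text interaction concrete, the following sketch shows the general idea of a curated set of entry points: a request is accepted only if it names a known tool and supplies exactly the expected, correctly typed arguments. It does not use the MCP SDK or any Questa interface; the tool names, argument schemas, and `validate_request` function are hypothetical and chosen only to echo the examples in the text.

```python
# Hypothetical catalog: tool name -> required argument names and types.
CURATED_TOOLS = {
    "run_simulation": {"test": str, "seed": int},
    "query_coverage": {"scope": str},
}


def validate_request(request):
    """Return (ok, message) for a structured tool request."""
    name = request.get("tool")
    if name not in CURATED_TOOLS:
        return False, f"unknown tool: {name!r}"
    schema = CURATED_TOOLS[name]
    args = request.get("args", {})
    # Reject both missing and unexpected arguments.
    if set(args) != set(schema):
        return False, f"expected arguments {sorted(schema)}, got {sorted(args)}"
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            return False, f"argument {key!r} must be {expected.__name__}"
    return True, "ok"
```

Because anything outside the catalog is rejected before it reaches a tool, an agent cannot fall back on arbitrary commands, which is the property that makes its behavior easier to review.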

Because Siemens owns the underlying engines, these interfaces can evolve in lockstep with expanding tool capabilities and expose internal states that external wrappers cannot reliably access. This engine-native context is paired with persistent awareness of relationships between designs, testbenches, assertions, verification plans, and historical outcomes. As a result, agents can reason across iterations rather than treating each action as an isolated request.

Equally important is openness. Design and verification environments are rarely homogeneous, and customers have strong preferences around infrastructure and AI frameworks. While the Questa One Agentic Toolkit is optimized for Siemens’ enterprise-level agentic framework, Fuse EDA AI, it is designed to be framework-agnostic, supporting integration with third-party agentic orchestration environments through standardized interfaces.

Agentic workflows in practice

The current release of the Questa One Agentic Toolkit focuses on human-directed assistance across RTL-centric workflows. Five intelligent agents illustrate how agentic AI can reduce friction while preserving rigor.

- RTL Code Agent: Design creation is increasingly constrained by the need to maintain alignment between architectural intent, coding practices, and downstream verification expectations. The RTL Code Agent generates synthesizable RTL from natural-language descriptions, while simultaneously applying verification-aware checks. Generated code is qualified using lint, static analysis, and structural checks aligned with established coding standards. Potential issues are surfaced immediately for engineering review, improving initial RTL quality and reducing downstream rework.

- Lint Agent: Configuring lint analysis effectively often requires deep tool expertise and intimate knowledge of design intent. The Lint Agent reads existing RTL, optimizes lint configuration, and analyzes results in context. Engineers can review findings and choose to apply AI-suggested fixes or waivers, ensuring that automation accelerates cleanup without masking real design issues.

- CDC Agent: Clock domain crossing verification is a persistent source of risk in modern SoCs. The CDC Agent configures and runs CDC analysis, then evaluates results to suggest configuration refinements. By iterating intelligently and summarizing outcomes, the agent helps engineers converge on clean asynchronous designs more efficiently, while keeping control firmly in human hands.

- Verification Planning Agent: Verification planning is a prime example of process-level complexity. Specifications evolve, features are refined, and plans must adapt as execution progresses. The Verification Planning Agent analyzes design specifications to generate structured, machine-readable verification plans. It identifies features, proposes test strategies and coverage objectives, and presents each stage for review. Approved plans are written directly into the verification environment and evolve alongside the design.

- Debug Agent: Debugging consumes a disproportionate share of verification effort. The Debug Agent correlates waveforms, assertions, coverage data, and logs across runs to identify recurring failure signatures and suspect signals. Rather than replacing engineer intuition, it accelerates root-cause analysis by surfacing evidence-backed hypotheses and suggesting targeted follow-up actions.

Across these agents, common characteristics emerge: engine-qualified execution, persistent contextual awareness, bounded actions with explicit approval, and intelligence that spans multiple steps and iterations. Together, they enable meaningful productivity gains without eroding trust.
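As a rough illustration of what identifying recurring failure signatures across runs could involve, the sketch below normalizes error messages so that run-specific details (timestamps, addresses, counters) drop out, then reports the signatures that appear in more than one run. The log message format, run names, and function names are invented for the example; the Debug Agent's actual correlation of waveforms, assertions, and coverage data is not described in enough detail here to reproduce.

```python
import re
from collections import defaultdict


def signature(message):
    """Normalize a log message so run-specific details do not matter."""
    msg = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)  # addresses, data values
    msg = re.sub(r"\d+", "<n>", msg)                   # times, counters, indices
    return msg.strip()


def recurring_signatures(runs, min_runs=2):
    """Map each signature seen in at least `min_runs` runs to those run IDs."""
    seen = defaultdict(set)
    for run_id, messages in runs.items():
        for message in messages:
            seen[signature(message)].add(run_id)
    return {sig: sorted(ids) for sig, ids in seen.items() if len(ids) >= min_runs}
```

Given two seeds that both report a FIFO overflow at different times and addresses, both messages collapse to the same signature, so the engineer sees one recurring problem rather than two unrelated log lines.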

Trust, safety, and sign-off integrity

Agentic systems introduce new considerations for reliability and risk management. Unchecked automation can amplify errors or obscure intent. The toolkit addresses these concerns through architectural constraints rather than policy overlays.

Bounded agent actions limit the scope of automated behavior. Engine-qualified validation ensures that AI-generated artifacts are evaluated using production-grade design and verification engines. Structured prompts and curated interfaces reduce ambiguity. Most importantly, human review is enforced at decision-critical stages.

This approach aligns with the realities of sign-off, where accountability ultimately rests with engineering teams. Agentic AI becomes a force multiplier for expertise rather than a substitute for it.

Looking ahead

Agentic design and verification is still evolving. Today’s focus is on human-directed workflows that reduce friction in planning, execution, and analysis. Over time, greater orchestration may become feasible, but only if introduced transparently and with robust validation.

The long-term objective is not to remove engineers from the loop, but to allow them to spend more time on intent, judgment, and risk management, and less time on manual coordination. As designs continue to grow in complexity, that shift may prove as important as any incremental improvement in solver performance.

For design and verification teams grappling with exploding iteration overhead, human-centered agentic AI offers a pragmatic path forward: faster progress, preserved rigor, and trust by design.

For a deeper dive into agentic AI, please see my new paper, Human-centered agentic AI workflows for RTL verification.
