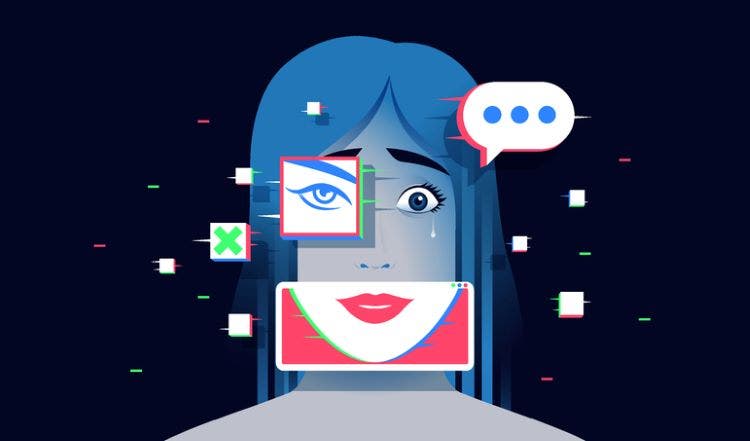

AI fakes do not escape data protection law

The ICO has warned that AI-generated deepfake images of real people, created without their consent, are subject to data protection laws, and has urged tech firms to adopt robust safeguards and accountability.

Britain’s privacy watchdog has issued a warning to technology firms over the rapid rise of AI-generated images depicting real people without their consent.

In a joint statement published on Monday, the Information Commissioner’s Office (ICO) joined more than 60 data protection and privacy authorities worldwide to raise concerns about the misuse of AI tools capable of generating convincing images and videos of identifiable individuals.

The signatories of the joint statement cautioned that companies cannot sidestep data protection law simply because a machine produced the content.

While acknowledging that AI can deliver “meaningful benefits for individuals and society”, the regulators said recent advances – particularly image and video generation tools embedded within widely used social media platforms – have fuelled a surge in non-consensual intimate images, defamatory portrayals and other forms of harmful content.

“We are especially concerned about potential harms to children and other vulnerable groups, such as cyberbullying and/or exploitation,” the signatories said.

The statement amounts to a clear warning: if an AI system can convincingly fabricate a real person, organisations cannot claim that existing privacy and data protection laws no longer apply.

It comes weeks after the ICO and other regulators launched formal investigations into xAI following reports that its Grok chatbot had generated sexualised images of real individuals without their consent.

The probes signalled an emerging willingness among regulators to test the boundaries of AI accountability in real time.

Following public criticism from victims, online safety advocates and politicians, xAI later stated that it had intervened to limit access to paying users only.

At the heart of Monday’s statement is a call for firms developing genAI systems to embed safeguards at the design stage, rather than treating safety as an afterthought.

Regulators warned that the technology has raced ahead of social norms.

Among the core principles set out are requirements to implement robust technical and organisational measures to prevent the misuse of personal information; to ensure meaningful transparency about AI systems’ capabilities, acceptable uses and the consequences of misuse; and to provide accessible, effective mechanisms enabling individuals to request the swift removal of harmful content.

Companies are also urged to address specific risks to children by introducing enhanced protections and providing age-appropriate information to children, parents and educators.

William Malcolm, the ICO’s executive director of regulatory risk and innovation, said the public should not have to choose between technological progress and personal safety.

“People should be able to benefit from AI without fearing that their identity, dignity or safety are under threat,” he said.

“AI already plays a large role in all our lives, and everybody has a right to expect that AI systems handling their personal data will do so with respect.”

Malcolm added that public trust was “foundational to the successful adoption and use of AI”, arguing that coordinated regulatory action across borders was intended to provide both high standards of protection and clarity for industry.

The message from regulators is clear. Firms that deploy systems capable of fabricating a person’s likeness are expected to demonstrate that privacy and safety were considered from the outset.

Where obligations are not met, the ICO warned, enforcement action will follow.
