If you think people are starting to sound a little like robots, you wouldn’t be wrong, according to a new study.

Experts have warned that as billions of people turn to the same AI tools for help, humanity is becoming more predictable and less imaginative.

These chatbots are standardising how we speak, write and think, they explained – and it risks reducing humanity’s collective wisdom.

They argue that AI developers should incorporate more real-world diversity into their technology to help preserve the unique way humans express themselves.

‘Individuals differ in how they write, reason, and view the world,’ first author Zhivar Sourati, from the University of Southern California, said.

‘When these differences are mediated by the same large language models (LLMs) their distinct linguistic style, perspective, and reasoning strategies become homogenized, producing standardized expressions and thoughts across users.’

He warned that individuality is being ‘flattened’ by the over-use of AI, with people increasingly using the same tone, vocabulary and complexity of language.

So, can you tell which of these was the original and which was written by a chatbot?

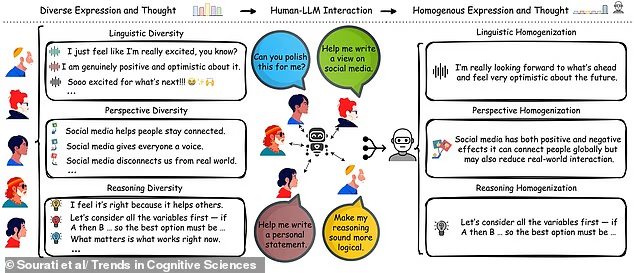

Common prompts that people input into AI include ‘Can you polish this for me’ or ‘Make my reasoning sound more logical’, the team explained.

For example, a chatbot can change the phrase ‘Soooo excited for what’s next!’ to ‘I’m really looking forward to what’s ahead and feel very optimistic about the future’.

When people use chatbots to polish their writing, the result loses its stylistic individuality, the team said.

‘The concern is not just that LLMs shape how people write or speak, but that they subtly redefine what counts as credible speech, correct perspective, or even good reasoning,’ Mr Sourati said.

The researchers said multiple studies have shown chatbot outputs are less varied than human-generated writing.

They also tend to reflect the language, values, and reasoning styles of Western, educated, industrialised, rich, and democratic societies.

‘Because LLMs are trained to capture and reproduce statistical regularities in their training data, which often overrepresent dominant languages and ideologies, their outputs often mirror a narrow and skewed slice of human experience,’ Mr Sourati added.

The team said that within groups and societies, having people who think differently bolsters creativity and problem solving. But this ‘cognitive diversity’ is shrinking as more people turn to AI, they wrote in the journal Trends in Cognitive Sciences.

‘If a lot of people around me are thinking and speaking in a certain way, and I do things differently, I would feel a pressure to align with them, because it would seem like a more credible or socially acceptable way of expressing my ideas,’ Mr Sourati explained.

Previous suggestions to help spot AI-generated text include looking out for inconsistencies and repetition, such as abrupt shifts in tone or repeated phrases or sentence structures.

Sometimes it may reference specific details without appropriate context, or feel basic and formulaic.

The excessive use of buzzwords and jargon can be a sign of AI filling gaps in its knowledge with generic vocabulary.

Finally, quick responses could also be a sign of AI-generated text, as people often take time to think and reply.

Developers have even released AI ‘detection tools’ to help pick out text that has been generated or improved by AI – for example student essays or job applications.

A preprint study recently found that people who regularly use chatbots can correctly determine whether an article was generated by AI about 90 per cent of the time.

However, people who don’t use them very often do only slightly better than chance.

Back in 2024, a research team at the University of Reading generated exam answers that were written entirely by ChatGPT.

They were submitted on behalf of 33 fake students to the examinations system of the University’s School of Psychology and Clinical Language Sciences.

The exam markers were unaware of the study.

Overall, the research team found that 94 per cent of their AI submissions went undetected. And on average, the fake answers earned higher grades than real students’ answers.
